I Built a Personalized AI News Podcast in 6 Hours

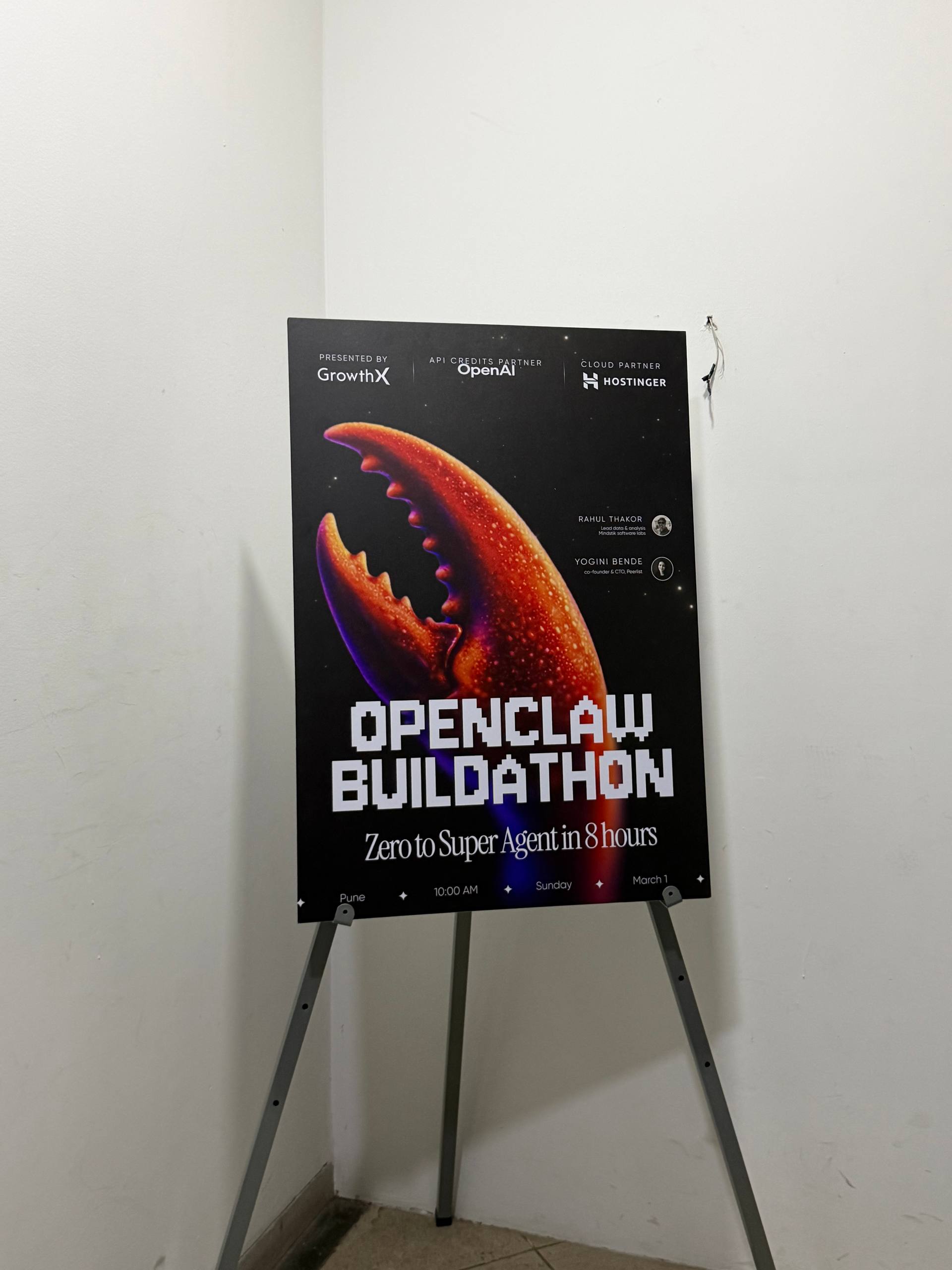

Honored to be part of India's first OpenClaw Buildathon.

GrowthX organized it across 5 cities. 25 builders selected per city, 6 hours to build, then you stand up and demo what you made. The Pune edition was hosted at One2N.

This was my first buildathon. I picked the Hard tier.

The Problem

Every morning I open 5 news apps. Scroll for 20 minutes. Still miss half the stuff that matters to me. Tech launches, India policy changes, geopolitical shifts. It's all scattered across different apps, different formats, different algorithms deciding what I should care about.

I wanted something that does the scrolling for me and turns it into audio I can listen to while getting ready.

What I Built

The Morning Scroll. A personalized AI news podcast that shows up in my Telegram every morning.

Here's what it does:

1. Scrapes the web for stories I care about. Tech, geopolitics, India news, product launches, community buzz. Not just headlines. It pulls context.

2. Writes a two-host conversational script. Josh and Rachel. Back-and-forth banter. Not a monotone news reader. Actual conversation with reactions, follow-ups, the occasional hot take.

3. Generates speech with ElevenLabs. Two distinct voices using eleven_turbo_v2_5. I spent a chunk of time tuning the voice settings. The default stability (0.5) sounds flat and robotic. Dropping it to 0.28 with similarity_boost at 0.82 and style at 0.45 made it sound way more natural. That tuning alone probably took an hour.

4. Produces mood-matched music beds. This is the part I'm most proud of. Each segment gets its own background music generated by the ElevenLabs Music API, matched to the mood of the content:

- Tech news gets upbeat electronic

- Geopolitics gets tense, cinematic stuff

- India stories get hopeful fusion

5. Mixes everything with ffmpeg. Voice audio goes on top of the music bed, with the music ducked to 15% volume during speech and 35% during intros/outros. Then all segments get concatenated into one episode.

6. Drops it into Telegram at 7 AM via cron. No app to open. No feed to scroll. Just hit play.
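The last part of step 5, stitching finished segments into one episode, maps naturally onto ffmpeg's concat demuxer. A minimal sketch, assuming every segment was already mixed to the same codec, sample rate and channel layout (stream copy with `-c copy` needs that); the segment file names are hypothetical.

```python
import shlex
import tempfile
from pathlib import Path


def write_concat_list(segments, list_path):
    """Write an ffmpeg concat-demuxer list file, one `file '...'` line per segment."""
    lines = []
    for seg in segments:
        # Escape single quotes the way the concat demuxer expects: ' -> '\''
        escaped = str(seg).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    Path(list_path).write_text("\n".join(lines) + "\n", encoding="utf-8")
    return lines


def build_concat_command(list_path, output):
    """Return the ffmpeg argv that joins the listed segments without re-encoding."""
    return [
        "ffmpeg", "-y",
        "-f", "concat",
        "-safe", "0",      # allow absolute paths in the list file
        "-i", str(list_path),
        "-c", "copy",      # stream copy: segments must share codec parameters
        str(output),
    ]


if __name__ == "__main__":
    segments = ["cold_open.mp3", "briefing.mp3", "closing.mp3"]  # hypothetical names
    with tempfile.TemporaryDirectory() as tmp:
        list_path = Path(tmp) / "segments.txt"
        write_concat_list(segments, list_path)
        print(list_path.read_text())
        print(shlex.join(build_concat_command(list_path, "episode.mp3")))
```

Stream copy keeps the join fast and lossless; if segments ever come out with mismatched formats, dropping `-c copy` and letting ffmpeg re-encode is the fallback.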

Listen to the demo

This is the actual output from the buildathon. Two voices, background music, 3 minutes 20 seconds. Visualized with word-by-word transcript sync.

The Episode Structure

I didn't want a flat list of news items read one after another. That's boring. The episode has actual segments:

- Cold Open

- The Briefing (3-4 main stories)

- Number of the Day

- One Big Idea

- Hot Take

- Trivia Drop

- One Thing to Try

- Closing

Target length: 5-7 minutes. Long enough to get through while brushing your teeth and making chai.
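One way to make the 5-7 minute target concrete is to hand each segment a word budget before the script is written. A sketch, assuming a speaking pace of roughly 150 words per minute (a common rule of thumb, not something measured in this build) and segment weights made up purely for illustration:

```python
from dataclasses import dataclass

# Assumed speaking pace; calibrate against real TTS output.
WORDS_PER_MINUTE = 150

# Hypothetical relative weights: how much airtime each segment gets.
SEGMENT_WEIGHTS = {
    "Cold Open": 1,
    "The Briefing": 6,
    "Number of the Day": 1,
    "One Big Idea": 2,
    "Hot Take": 1,
    "Trivia Drop": 1,
    "One Thing to Try": 1,
    "Closing": 1,
}


@dataclass
class SegmentBudget:
    name: str
    words: int


def plan_episode(target_minutes, weights=SEGMENT_WEIGHTS, wpm=WORDS_PER_MINUTE):
    """Split a total word budget across segments in proportion to their weights."""
    total_words = round(target_minutes * wpm)
    total_weight = sum(weights.values())
    return [
        SegmentBudget(name, round(total_words * w / total_weight))
        for name, w in weights.items()
    ]


if __name__ == "__main__":
    for seg in plan_episode(6):
        print(f"{seg.name:<18} ~{seg.words} words")
```

The budgets would go into the script-writing prompt; per-segment rounding means the total can drift by a word or two from the target.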

The Hard Parts

None of the individual pieces are complicated. Web search, TTS, music generation, audio mixing, cron scheduling. All well-documented, all have APIs. The hard part was getting them to work together in 6 hours.

A few things that bit me:

ElevenLabs Music API duration is unreliable. I asked for 30 seconds of music, got 150 seconds. Asked for 240 seconds, also got 150 seconds. The duration_seconds parameter is more of a suggestion than a constraint. Had to trim everything with ffmpeg afterwards.
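Since duration_seconds couldn't be trusted, the practical fix is to treat whatever comes back as raw material and cut it to length. A sketch of that ffmpeg call, assuming you also want a short fade-out so the cut isn't abrupt (the 2-second fade is an illustrative choice, not part of the original build):

```python
import shlex


def build_trim_command(src, dst, seconds, fade_out=2.0):
    """Return ffmpeg argv that cuts `src` to `seconds` and fades out the tail."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    fade = min(fade_out, seconds)          # never fade longer than the clip
    fade_start = seconds - fade
    return [
        "ffmpeg", "-y",
        "-i", str(src),
        "-t", f"{seconds:g}",              # hard cap on output duration
        "-af", f"afade=t=out:st={fade_start:g}:d={fade:g}",
        str(dst),
    ]


if __name__ == "__main__":
    # e.g. a 150 s track that should have been 30 s
    print(shlex.join(build_trim_command("bed_raw.mp3", "bed_30s.mp3", 30)))
```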

Rate limits on parallel music generation. Tried generating 3 music beds at once. Got 429'd immediately. Had to stagger them with delays.
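The staggering can be sketched as a sequential loop with a fixed gap between requests, plus exponential backoff when a 429 comes back anyway. This is a hedged sketch: `generate` stands in for whatever function actually calls the ElevenLabs Music API, `RateLimited` is a hypothetical exception your wrapper would raise on HTTP 429, and the mood prompts are illustrative, not the ones used in the build.

```python
import time


class RateLimited(Exception):
    """Hypothetical: raise this from your API wrapper on an HTTP 429."""


# Illustrative prompts for the mood-matched beds.
MOOD_PROMPTS = {
    "tech": "upbeat electronic background music, no vocals",
    "geopolitics": "tense cinematic underscore, no vocals",
    "india": "hopeful Indian fusion, light percussion, no vocals",
}


def generate_staggered(generate, prompts, gap=5.0, max_retries=4,
                       base_backoff=2.0, sleep=time.sleep):
    """Call generate(prompt) one at a time, pausing `gap` seconds between calls
    and backing off exponentially (2s, 4s, 8s, ...) on RateLimited."""
    results = {}
    for i, (mood, prompt) in enumerate(prompts.items()):
        if i > 0:
            sleep(gap)                       # stagger: never fire in parallel
        for attempt in range(max_retries + 1):
            try:
                results[mood] = generate(prompt)
                break
            except RateLimited:
                if attempt == max_retries:
                    raise                    # give up after the last retry
                sleep(base_backoff * 2 ** attempt)
    return results
```

Passing `sleep` in as a parameter keeps the retry timing testable without actually waiting.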

Voice stability matters more than you'd think. Default ElevenLabs settings sound like a bored news anchor. You need to push stability down and style up to get anything that sounds like a real conversation. I went through maybe 15 test generations before landing on settings that worked.
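Here is roughly what a request with those settings looks like against ElevenLabs' text-to-speech REST endpoint. A sketch under assumptions: the script uses `JOSH:` / `RACHEL:` line prefixes (a convention invented for this example), the voice IDs are placeholders, and the code only builds the requests rather than sending them. Check the current ElevenLabs API reference before relying on the exact field names.

```python
import json
import urllib.request

TTS_URL = "https://api.elevenlabs.io/v1/text-to-speech/{voice_id}"

# Placeholder voice IDs; substitute the IDs of the voices you picked.
VOICES = {"JOSH": "VOICE_ID_JOSH", "RACHEL": "VOICE_ID_RACHEL"}

# The settings from the post: lower stability, higher style.
VOICE_SETTINGS = {"stability": 0.28, "similarity_boost": 0.82, "style": 0.45}


def parse_script(script):
    """Split 'SPEAKER: line' text into (speaker, text) turns, skipping blanks."""
    turns = []
    for raw in script.splitlines():
        if not raw.strip():
            continue
        speaker, sep, text = raw.partition(":")
        speaker = speaker.strip().upper()
        if not sep or speaker not in VOICES:
            raise ValueError(f"unrecognised line: {raw!r}")
        turns.append((speaker, text.strip()))
    return turns


def build_tts_request(speaker, text, api_key):
    """Build (but do not send) a POST request for one turn of dialogue."""
    body = {
        "text": text,
        "model_id": "eleven_turbo_v2_5",
        "voice_settings": VOICE_SETTINGS,
    }
    return urllib.request.Request(
        TTS_URL.format(voice_id=VOICES[speaker]),
        data=json.dumps(body).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json",
                 "Accept": "audio/mpeg"},
        method="POST",
    )


if __name__ == "__main__":
    script = "JOSH: Big week for chips.\nRACHEL: Wait, which ones?"
    for speaker, text in parse_script(script):
        req = build_tts_request(speaker, text, api_key="YOUR_KEY")
        print(req.get_method(), req.full_url)
        # Sending it with urllib.request.urlopen(req) would return the audio bytes.
```

Generating one request per turn keeps each voice's clip separate, which makes it easy to regenerate a single line that came out wrong instead of the whole segment.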

ffmpeg audio ducking is fiddly. Getting the music volume right relative to speech is a feel thing. Too loud and it's distracting. Too quiet and it might as well not be there. 15% during speech, 35% during transitions landed well.
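For a single segment, the 15%/35% split can be expressed as a time-dependent volume on the music track. A sketch, assuming each segment is laid out as a music-only intro, then speech, then a music-only outro, with the voice delayed by the intro length; the timings are parameters you would read from the generated files. The `normalize=0` option on `amix` and `all=1` on `adelay` need a reasonably recent ffmpeg.

```python
import shlex

SPEECH_LEVEL = 0.15      # music level under the voice
TRANSITION_LEVEL = 0.35  # music level in intro/outro


def build_mix_command(voice, music, output, intro_s, speech_s, outro_s):
    """Return ffmpeg argv that lays a voice track over a ducked music bed."""
    speech_start = intro_s
    speech_end = intro_s + speech_s
    total = speech_end + outro_s
    delay_ms = round(intro_s * 1000)
    bed = (
        f"[1:a]atrim=0:{total:g},"
        # eval=frame re-evaluates the volume expression as t advances
        f"volume='if(between(t,{speech_start:g},{speech_end:g}),"
        f"{SPEECH_LEVEL},{TRANSITION_LEVEL})':eval=frame[bed]"
    )
    vox = f"[0:a]adelay={delay_ms}:all=1[vox]"   # start speech after the intro
    mix = "[vox][bed]amix=inputs=2:duration=longest:normalize=0[out]"
    return [
        "ffmpeg", "-y",
        "-i", str(voice),   # input 0: the dialogue
        "-i", str(music),   # input 1: the music bed
        "-filter_complex", ";".join([bed, vox, mix]),
        "-map", "[out]",
        str(output),
    ]


if __name__ == "__main__":
    print(shlex.join(build_mix_command("voice.mp3", "bed.mp3", "seg.mp3", 3, 40, 4)))
```

Passing the whole graph as one argv element (no shell) means only ffmpeg's own quoting matters; the single quotes stop the filtergraph parser from treating the commas inside `if(...)` as filter separators.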

The Stack

- OpenClaw as the orchestration layer (skills, cron, Telegram delivery)

- Web search for content sourcing

- ElevenLabs TTS (eleven_turbo_v2_5) for voice generation

- ElevenLabs Music API for mood-matched background music

- ffmpeg for audio mixing and concatenation

- Cron for daily 7 AM delivery
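OpenClaw handled scheduling and delivery here, so this is only a rough equivalent if you wanted to wire up the last step yourself: a call to the Telegram Bot API's `sendAudio` method, triggered by a crontab entry such as `0 7 * * * python send_episode.py` (the script name is hypothetical). It assumes the third-party `requests` library for the multipart upload; `post` is injectable so the logic can be exercised without a network.

```python
from pathlib import Path

API_URL = "https://api.telegram.org/bot{token}/sendAudio"


def send_episode(token, chat_id, episode_path, title="The Morning Scroll", post=None):
    """Upload an MP3 to a Telegram chat via the Bot API's sendAudio method."""
    if post is None:
        import requests  # third-party; only needed for the real upload
        post = requests.post
    path = Path(episode_path)
    with path.open("rb") as audio:
        resp = post(
            API_URL.format(token=token),
            data={"chat_id": chat_id, "title": title},
            files={"audio": (path.name, audio, "audio/mpeg")},
            timeout=60,
        )
    resp.raise_for_status()   # surface HTTP errors instead of failing silently
    return resp.json()
```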

The Demo

6 hours in, I hit play in front of 25 people. Two distinct voices having an actual conversation about that morning's news, with music underneath that matched the mood of each segment.

It wasn't polished. The transitions were rough, the episode ran a bit long, and the music beds sometimes clashed with the voice audio. But it worked. It sounded like a real podcast, not a text-to-speech dump.

What's Missing (for now)

This was a 6-hour build, not a production system. A few things I'd add if I take this further:

- Telegram bot handlers for subscribing, setting preferences, picking topics

- Episode length targeting so it actually hits 5-7 minutes consistently

- Better content curation with user feedback loops

- Intro/outro jingles instead of generated music for those segments

- Multiple language support (Hindi would be obvious)

The Best Part

The demo circle at the end. 25 people who'd been heads-down building for 6 hours, all showing what they made. Completely different projects, same platform. That energy is hard to find outside of events like this.

Thanks to GrowthX and One2N for putting this together. India's first OpenClaw Buildathon. Hopefully not the last.